For a few months, I’ve been reading about Ceph and how it works. I love distributed systems, maybe because I can run multiple machines, and the idea of clustering has always fascinated me. In Ceph, the more machines, the better!

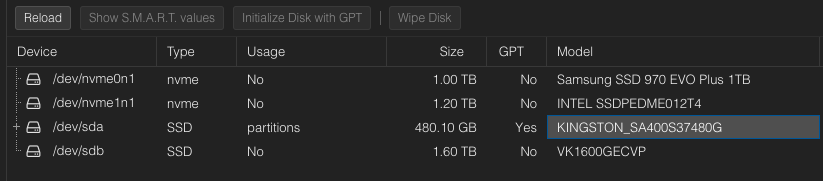

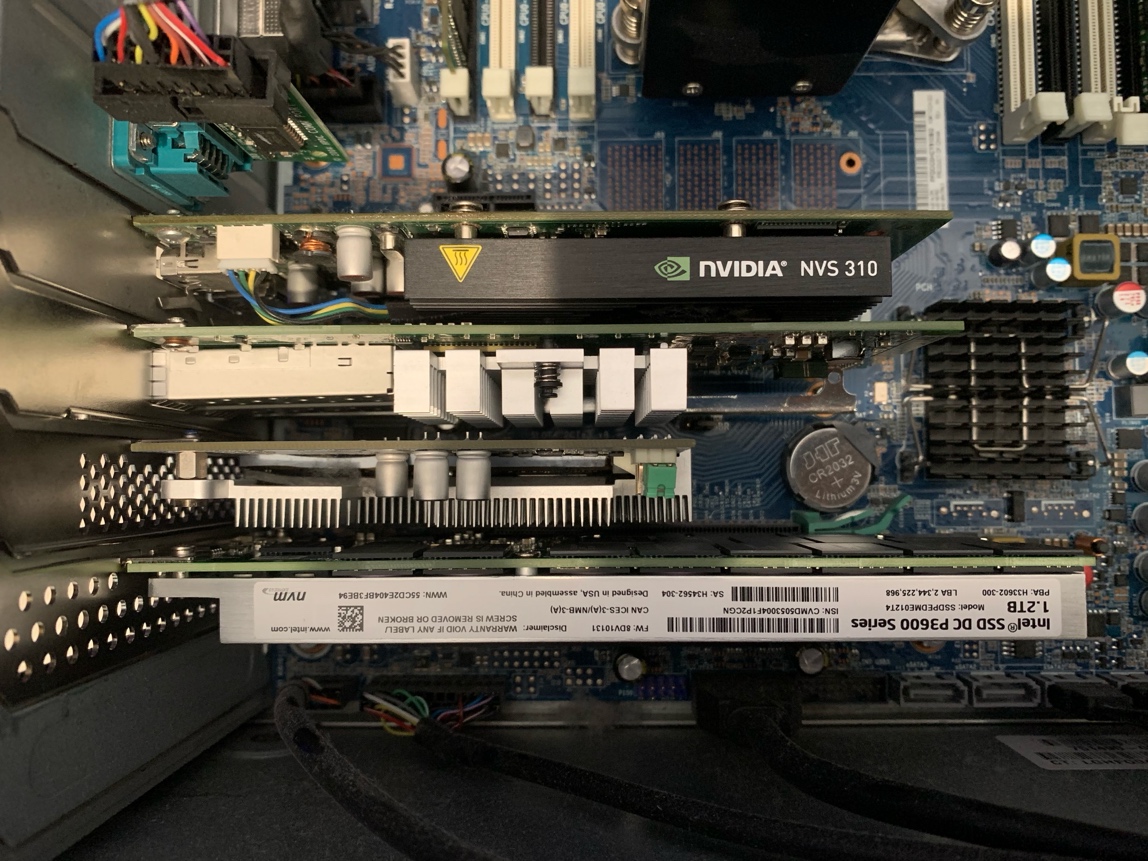

If you have multiple machines with lots of SSD/NVMe drives, Ceph performance will be very different from a 3-node cluster with only one OSD per node. The latter is my setup, and it has been working well.
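One rough way to see why more OSDs help: Ceph spreads each pool's placement groups (PGs) and their replicas across all OSDs, so the more OSDs you have, the fewer PG replicas (and the less I/O) each one carries. The sketch below is only a back-of-the-envelope model, assuming a perfectly even CRUSH distribution; the helper name `pg_replicas_per_osd` and the 128-PG, 3-replica pool are hypothetical, and real performance also depends on drive speed, network, and CRUSH weights.

```python
def pg_replicas_per_osd(pg_num: int, size: int, num_osds: int) -> float:
    """Average PG replicas per OSD, assuming CRUSH spreads them evenly (hypothetical helper)."""
    if num_osds <= 0:
        raise ValueError("need at least one OSD")
    # Every PG is stored `size` times, and those copies are shared among all OSDs.
    return pg_num * size / num_osds


if __name__ == "__main__":
    # Hypothetical pool: 128 PGs with 3 replicas each.
    for osds in (3, 6, 12):
        print(f"{osds:>2} OSDs -> {pg_replicas_per_osd(128, 3, osds):.0f} PG replicas per OSD")
```

With one OSD per node on three nodes, every OSD holds a replica of every PG; going to 12 OSDs cuts that load to a quarter per device.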

Installing Ceph on Proxmox is just a few clicks away, and the process is already documented at https://pve.proxmox.com/wiki/Deploy_Hyper-Converged_Ceph_Cluster
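If you prefer the shell over the GUI, the same steps map to `pveceph` subcommands. The sketch below only builds and prints the command sequence for one node (a dry run, nothing is executed); the helper name, the `10.10.10.0/24` Ceph network, and the `/dev/sdb` device are assumptions for illustration, so check the `pveceph` man page on your Proxmox version before running anything.

```python
import shlex


def build_pveceph_plan(network: str, devices: list[str], first_node: bool) -> list[list[str]]:
    """Return pveceph commands for one node, in order (hypothetical helper, dry run only)."""
    plan = [["pveceph", "install"]]  # install the Ceph packages on this node
    if first_node:
        # The Ceph config (including its network) is initialized once per cluster.
        plan.append(["pveceph", "init", "--network", network])
    plan.append(["pveceph", "mon", "create"])  # one monitor per node in a small cluster
    # One OSD per listed disk; in a 3-node, 1-OSD-per-node setup this is a single entry.
    plan += [["pveceph", "osd", "create", dev] for dev in devices]
    return plan


if __name__ == "__main__":
    for cmd in build_pveceph_plan("10.10.10.0/24", ["/dev/sdb"], first_node=True):
        print(shlex.join(cmd))
```

Keeping the plan as a list of argument lists makes it easy to review first and later pass each entry to `subprocess.run` if you decide to automate it.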

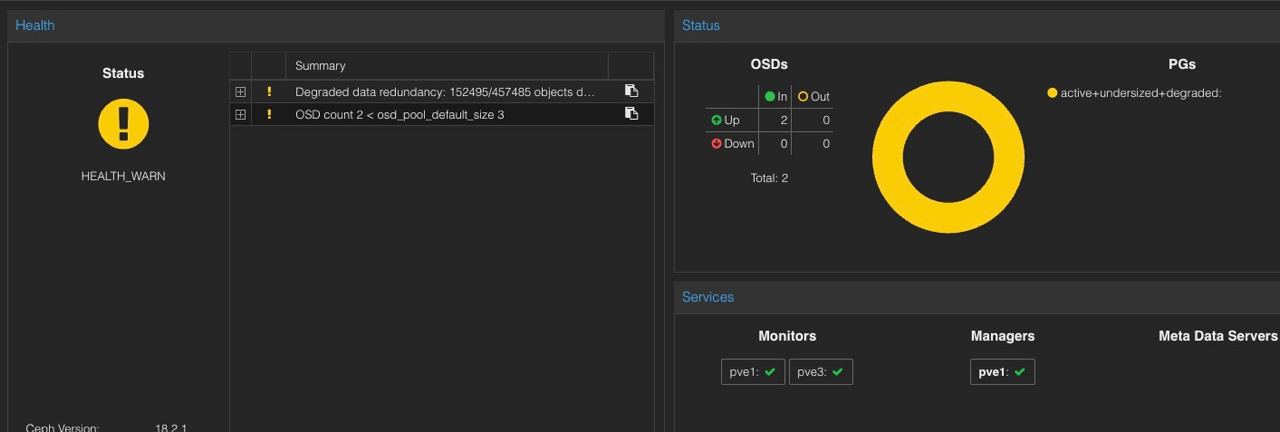

At first, I had only two nodes, and Ceph reported an unhealthy state.

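The CRUSH map created by Proxmox expects a 3-host configuration, with at least one OSD on each host. By default, each pool keeps 3 replicas and the CRUSH rule places every replica on a different host, so with only 2 hosts (1 OSD each, as in this picture), Ceph cannot place the third copy.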
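To make the host-level rule concrete, here is a simplified model of replica placement with the failure domain set to `host`, assuming the Proxmox defaults of `size=3` and `min_size=2`. It is not Ceph's actual CRUSH algorithm: the `Osd` class, `place_replicas`, and `pg_state` are hypothetical, and the returned strings are simplified labels loosely modelled on Ceph PG states.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Osd:
    osd_id: int
    host: str


def place_replicas(osds: list[Osd], size: int) -> list[Osd]:
    """Pick at most one OSD per host until `size` replicas are placed (failure domain = host)."""
    chosen: list[Osd] = []
    used_hosts: set[str] = set()
    for osd in osds:
        if osd.host in used_hosts:
            continue  # a second replica on the same host is not allowed
        chosen.append(osd)
        used_hosts.add(osd.host)
        if len(chosen) == size:
            break
    return chosen


def pg_state(osds: list[Osd], size: int = 3, min_size: int = 2) -> str:
    """Simplified PG health label based on how many replicas could be placed."""
    placed = len(place_replicas(osds, size))
    if placed < min_size:
        return "inactive"  # too few copies to serve I/O
    if placed < size:
        return "active+undersized"  # I/O works, but redundancy is missing
    return "active+clean"


if __name__ == "__main__":
    two_hosts = [Osd(0, "pve1"), Osd(1, "pve2")]
    print(pg_state(two_hosts))                          # my initial 2-node setup
    print(pg_state(two_hosts + [Osd(2, "pve3")]))       # after adding a third host
```

Note that adding a second OSD to an existing host does not help in this model: only a third host gives CRUSH somewhere to put the third replica.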